Refactoring P¬P: Introducing SEN (Self-Evolving Networks)

Note:

On a broader note (before the technical one)

I just wanted to explore more the implications that this iteration had, how it connects to the previous blog post, and what I expect for the future of this development. The main thing that I left with intrigue is this line here:

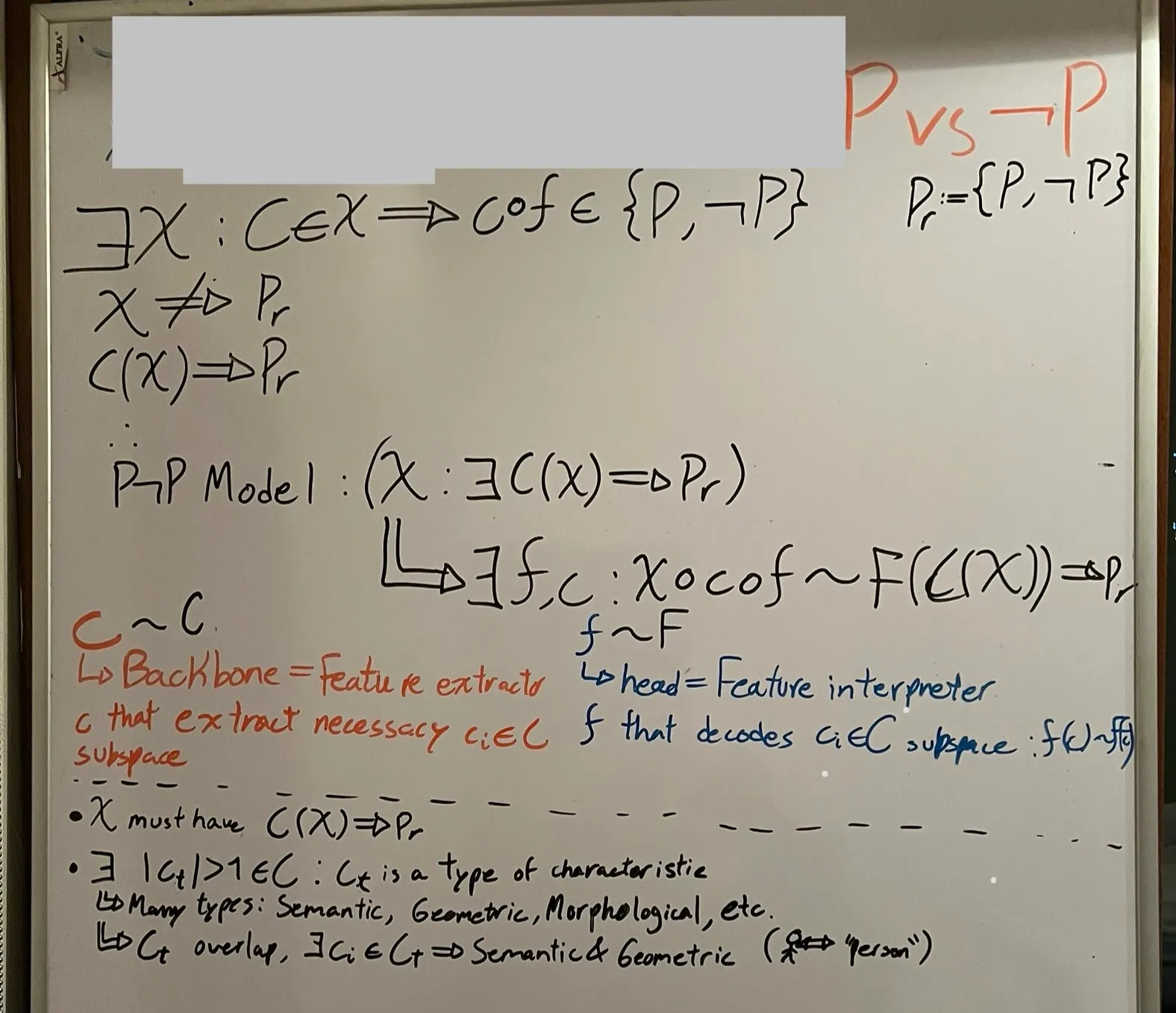

The P¬P problem can be decomposed into two phases: feature extraction and feature decoding / interpretation. This decomposition implies two things. First, there exists a set of extractable characteristics from that correlate with ; we denote this set as , the characteristic category, understood as a collection of characteristic types together with their realizations under . Second, there exist characteristic types such that each type admits multiple realizations (i.e., ). These types may be semantic, geometric, morphological, or otherwise.

If such characteristic types exist, then different realizations of the same type—possibly across modalities—must map to overlapping regions under the decoding or interpretation process. For example, the semantic characteristic corresponding to the concept “person” may be realized both by a word embedding and by visual features extracted from an image of a person, even though their raw representations live in different input spaces.

The reason why I say this is because if that is true, then has to be able to represent said overlap in such a way that the feature decoder can then map correctly. But the question is: how?

Well, here’s why I believe SEN must be the way forward.

SSL is fairly good extracting EVERY feature, the weakness is therefore exactly that: it maps everything, notices everything, and thus, due to many neurons working to note foreground / non-usable characteristics, a semantic segmentation or a classification task becomes very challenging or compute-heavy. This is, there must be a way to simply scale the model or prune it based on the needs of the model. The idea of applying SEN is that it cuts out the neurons that don’t correlate with and thus ’s job is easier since it doesn’t listen to inactive neurons or noisy neurons.

What was interesting, though, was the fact that in all iterations it reached the computational limit for growth. That is, it pruned at the start (cut out noisy or inactive neurons), and then grew as it needed new capabilities. The system showed clear signs that additional compute and width would be productively used, not wasted. This says that it simply needs more capability until (and here’s the sub-problem kicker) it can extract a sufficiently rich subset of such that .

In other words, just like there’s a need to cut out noisy neurons, there must exist cases where it actually needs more neurons to amplify the model’s level of abstraction.

The next step is simple though mathematically complex: amplifying SEN to both and . If we can do that, a model which entirely evolves, then I think that it would be the biggest step to solving the P¬P problem. Anyways, back to the whiteboard!

Technical section of iteration

This iteration focused on restructuring the P¬P segmentation pipeline to remove the assumption of fixed internal capacity. The core change was the integration of SEN (Self-Evolving Networks) into the P¬P backbone, enabling the model to dynamically prune and grow internal neuron groups during training.

Architectural changes

The original P¬P segmentation model used a fixed-width SSL backbone with a segmentation head. While effective, this design imposed a hard representational ceiling: once saturated, further optimization only produced diminishing returns.

The refactor introduced three key components:

1. Gated group structure

Selected internal layers (notably TokenProjection) were partitioned into groups of neurons, each controlled by a learnable scalar gate. Each group can be:

- Active (contributing to forward computation)

- Dormant (present but inactive)

- Pruned (frozen and excluded)

This transforms internal capacity into a controllable, discrete resource rather than a static design choice.

2. GateManager + edit loop

A centralized GateManager tracks:

- active group count (alive)

- dormant capacity

- per-group utility scores

- edit history and cooldowns

At fixed edit intervals, the model evaluates whether to:

- PRUNE low-utility groups

- GROW new groups from dormant capacity

- or do nothing

Edits are triggered by plateau detection on validation metrics, not by step-level gradients.

3. Semantic utility (SEN mode)

A new semantic utility mode was added alongside the original gradient-based utility.

For each gated group, semantic utility is computed as:

where:

- A is the group’s activation

- foreground/background are derived from the segmentation mask

- penalizes background correlation

This explicitly favors neurons whose activation implies the target predicate , not merely those that reduce loss indirectly.

Utility is computed over multiple validation batches, pooled, normalized, and used to rank groups for prune/grow decisions.

Training behavior and observations

Edit dynamics

With SEN enabled:

- The model performed real architectural edits (both prune and grow).

- Early training occasionally pruned low-utility groups.

- As training progressed, growth dominated.

This behavior was stable and repeatable.

Capacity saturation

Across runs, the model consistently expanded until reaching k_max, after which:

- further grow attempts were correctly skipped

- validation loss continued improving slowly

- no additional prune candidates emerged

This indicates that, under the current objective and dataset:

- all available groups contributed positively to semantic utility

- pruning further would harm validation performance

In other words, the model was capacity-limited, not optimization-limited.

Compute ceiling

Two constraints became apparent:

- Architectural ceiling

Even with dynamic pruning, the model converged to maximal internal capacity, implying that the representational needs exceeded the original backbone width.

- Compute ceiling

Kaggle-scale compute restricted:

- input resolution

- batch size

- edit horizon

- depth of gated layers

The system showed clear signs that additional compute and width would be productively used, not wasted.

What this refactor achieved (technically)

- Removed the assumption of a fixed optimal architecture

- Enabled per-neuron-group survival based on semantic alignment

- Demonstrated that P¬P naturally pushes toward higher capacity when allowed

- Distinguished between:

- neurons that are active

- neurons that are useful

- neurons that imply

Technical takeaway

The experiments show that P¬P segmentation is not bottlenecked by loss design or optimization stability. It is bottlenecked by semantic capacity.

SEN does not merely prune unused neurons; it exposes when the model has exhausted all semantically meaningful capacity. When given the option, the network consistently chose to grow until hitting its structural and compute limits.

This confirms that the correct next step is scaling combined with self-evolution, not static architecture tuning.